Overview & Concepts¶

This page gives an overview of the concepts that are essential to understand when working with StreamMine3G:

- StreamMine3G Cluster

- Streams

- Operators

- Topologies

- Slices aka. Partitions

- Slice Deployment

- Partitioner

StreamMine3G Cluster¶

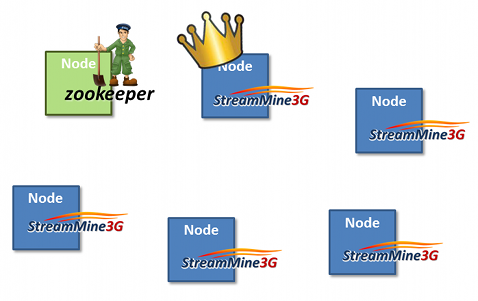

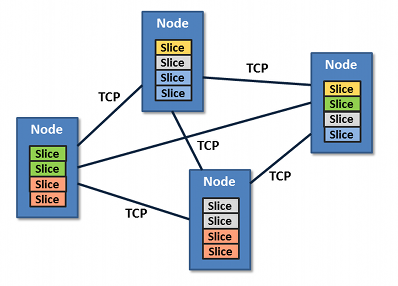

A StreamMine3G cluster consists of a set of nodes running StreamMine3G as well as ZooKeeper. ZooKeeper stores the configuration of the StreamMine3G cluster, such as which operators are currently deployed on the cluster. Using ZooKeeper has two advantages: it replicates the stored configuration to provide fault tolerance, and it uses consensus to keep its replicas consistent.

As in Hadoop, StreamMine3G has a master node, which is called the manager. In contrast to Hadoop, however, any StreamMine3G node can be the manager. The manager decides on which worker nodes operators are deployed or undeployed within the cluster. It can also trigger a migration of operators, i.e., move an operator from one node to another if the original node is either overutilized or underutilized.
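The migration decision described above can be illustrated with a small sketch. It is not StreamMine3G's manager API: the function `pick_migration`, the utilization dictionary, and the thresholds `low` and `high` are hypothetical names and values chosen only for this example.

```python
def pick_migration(utilization, low=0.2, high=0.8):
    """Return a (source_node, target_node) pair, or None if no move is needed.

    utilization maps a node name to its load as a fraction between 0 and 1.
    The thresholds are illustrative assumptions, not StreamMine3G defaults.
    """
    if len(utilization) < 2:
        return None  # nothing to migrate to

    busiest = max(utilization, key=utilization.get)
    idlest = min(utilization, key=utilization.get)

    # Overloaded node: shift load to the least loaded node.
    if utilization[busiest] > high and busiest != idlest:
        return (busiest, idlest)

    # Underutilized node: move its operators away so the node can be freed.
    if utilization[idlest] < low:
        others = {n: u for n, u in utilization.items() if n != idlest}
        return (idlest, min(others, key=others.get))

    return None


move = pick_migration({"node-1": 0.95, "node-2": 0.40, "node-3": 0.10})
```

Here `node-1` exceeds the upper threshold, so the sketch proposes moving an operator from `node-1` to `node-3`, the least loaded node.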

In contrast to the manager, worker nodes in a StreamMine3G cluster only host and execute operators: they receive data from external data sources, do the actual processing, and forward the results either to downstream operators or to an external sink, e.g., a web page, a web service, or a database.

Streams¶

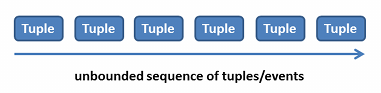

A stream in StreamMine3G is an unbounded sequence of tuples/events, where a tuple/event can be any kind of data, e.g., readings from temperature sensors, or credit card transactions or call detail records that are analyzed for fraud detection.

Operators¶

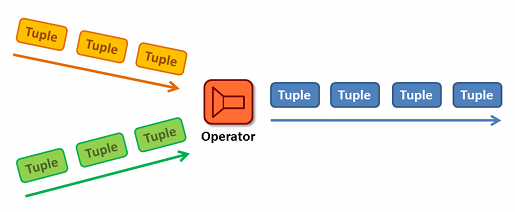

Operators are needed to process those event streams. An operator can perform any computation the user has in mind. For example, as shown in the figure, the reddish operator could join the yellowish and greenish event streams on some join key and emit the resulting events to the reddish stream.
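The join example can be sketched as a symmetric hash join. This is a minimal illustration, not the StreamMine3G Operator interface: the class `JoinOperator`, its `process` method, and the event fields are hypothetical. The sketch also keeps all events forever, whereas a real operator on an unbounded stream would have to bound its state, for example with a window.

```python
from collections import defaultdict


class JoinOperator:
    """Hypothetical operator that joins two event streams on a key."""

    def __init__(self, key_fn):
        self.key_fn = key_fn  # extracts the join key from an event
        # Events seen so far on each input, grouped by join key.
        self.seen = {"left": defaultdict(list), "right": defaultdict(list)}

    def process(self, side, event):
        """Store the event and return all joined (left, right) pairs."""
        other = "right" if side == "left" else "left"
        key = self.key_fn(event)
        self.seen[side][key].append(event)

        results = []
        for match in self.seen[other][key]:
            pair = (event, match) if side == "left" else (match, event)
            results.append(pair)
        return results


join = JoinOperator(key_fn=lambda e: e["card"])
first = join.process("left", {"card": "A", "amount": 10})
second = join.process("right", {"card": "A", "country": "DE"})
```

The first call finds no partner and emits nothing. The second call finds the stored left event with the same key and emits one joined pair.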

To read more about how to write operators for StreamMine3G using the Operator interface, click here.

Topologies¶

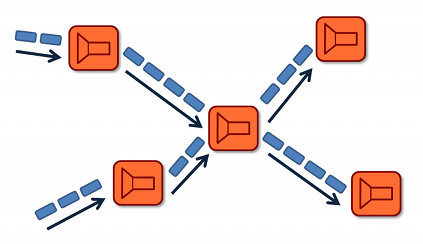

A topology in StreamMine3G describes which operators receive which event streams, in other words, how the operators are connected to each other. As the figure shows, an operator in StreamMine3G can receive events from multiple upstream operators and send its results to multiple downstream operators.
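One way to picture a topology is as a directed graph that maps each operator to its downstream operators. The sketch below is purely illustrative: the operator names and the helper functions are hypothetical and do not reflect how StreamMine3G stores or configures topologies.

```python
# Each operator maps to the list of operators that receive its output.
topology = {
    "yellow_source": ["join"],
    "green_source": ["join"],
    "join": ["alerts", "archive"],
    "alerts": [],
    "archive": [],
}


def upstream_of(topology, operator):
    """Operators whose output stream is consumed by the given operator."""
    return sorted(src for src, targets in topology.items() if operator in targets)


def downstream_of(topology, operator):
    """Operators that consume the output stream of the given operator."""
    return list(topology[operator])


join_inputs = upstream_of(topology, "join")
join_outputs = downstream_of(topology, "join")
```

In this example, `join` has two upstream operators and two downstream operators, which matches the fan-in and fan-out described above.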

Slices aka. Partitions¶

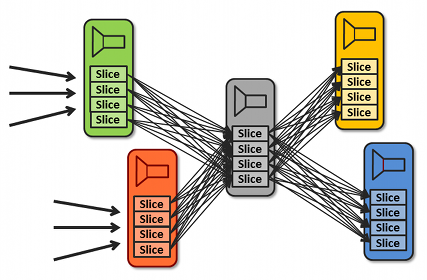

To make an event stream processing system such as StreamMine3G scalable, data needs to be partitioned. In StreamMine3G, those partitions are called slices. With data partitioning, parts of an operator can be placed on individual nodes. Furthermore, slices can be migrated later on, either to free resources in a cloud environment when a node is underutilized or to shift load from an overloaded node to a less loaded one. As shown in the figure, each of the operators consists of four slices, so each of those slices can potentially be placed on a separate node.

Slice Deployment¶

In addition to slices, which are an abstraction for operator partitions, StreamMine3G has nodes. A node can host an arbitrary number of slices of the same or of different operators. StreamMine3G lets users decide where to place operator slices. In the example slice deployment, two reddish operator slices are collocated with two greenish operator slices on the leftmost node of the figure. Such a collocation is useful if, for instance, the reddish operator consumes many CPU cycles whereas the greenish one consumes a lot of network bandwidth, because the node's resources are then better utilized overall.
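A slice deployment can be thought of as a mapping from nodes to the slices they host. The following sketch assumes two operators with four slices each. Only the collocation on the first node is taken from the example above; the remaining placement, the node names, and the helpers `node_of` and `migrate` are hypothetical and are not the manager interface.

```python
# Node name -> list of (operator, slice_id) pairs hosted on that node.
deployment = {
    "node-1": [("red", 0), ("red", 1), ("green", 0), ("green", 1)],
    "node-2": [("red", 2), ("red", 3)],
    "node-3": [("green", 2), ("green", 3)],
}


def node_of(deployment, operator, slice_id):
    """Return the node that currently hosts the given slice, or None."""
    for node, slices in deployment.items():
        if (operator, slice_id) in slices:
            return node
    return None


def migrate(deployment, operator, slice_id, target):
    """Move one slice to the target node and return the node it came from."""
    source = node_of(deployment, operator, slice_id)
    if source is None:
        raise KeyError(f"slice {operator}/{slice_id} is not deployed")
    if source != target:
        deployment[source].remove((operator, slice_id))
        deployment.setdefault(target, []).append((operator, slice_id))
    return source


previous = migrate(deployment, "red", 1, "node-2")
```

After the call, slice 1 of the `red` operator is hosted on `node-2` instead of `node-1`, while all other slices stay where they were.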

To read more about how to deploy and migrate slices on a StreamMine3G cluster using the manager interface, click here.

Partitioner¶

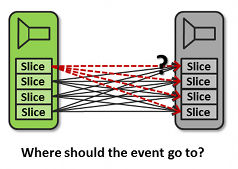

To partition the data across a given set of slices, such as the four slices/partitions shown in the figure, the user has to decide which events go to which downstream slice. For this purpose, StreamMine3G offers a Partitioner interface similar to the one in Hadoop. If no custom partitioner code is provided, StreamMine3G uses a so-called KeyRangePartitioner by default.
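The idea of routing events to slices can be sketched with two common strategies. Neither function is the StreamMine3G Partitioner interface, and the actual behavior of KeyRangePartitioner is not specified on this page: `key_range_partition` shows one plausible reading of key-range partitioning, assuming integer keys from a known key space, and `hash_partition` is a generic alternative for string keys. All names and parameters are hypothetical.

```python
import zlib


def key_range_partition(key, num_slices, key_space=2**32):
    """Assign an integer key to a slice by splitting the key space into
    num_slices contiguous ranges of (almost) equal size."""
    if not 0 <= key < key_space:
        raise ValueError("key is outside the assumed key space")
    return key * num_slices // key_space


def hash_partition(key, num_slices):
    """Assign a string key to a slice using a stable checksum."""
    # zlib.crc32 is deterministic across runs, unlike the built-in hash().
    return zlib.crc32(key.encode("utf-8")) % num_slices


# With a key space of 100 and four slices, keys 0-24 go to slice 0,
# keys 25-49 to slice 1, and so on.
slice_for_24 = key_range_partition(24, 4, key_space=100)
slice_for_25 = key_range_partition(25, 4, key_space=100)
```

Range partitioning keeps neighboring keys on the same slice, which can help range queries but may cause skew if keys are unevenly distributed. Hash partitioning spreads keys more evenly at the cost of locality.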

To read more about how to use the Partitioner interface, click here.

What to do next? Try out the WordCount example...